On 10 July 2025, the ELIAS, ELLIOT, and ELSA projects co-organised a Theme Development Workshop (TDW) focused on Foundation Models in Thessaloniki, Greece. The event was hosted by the Information Technologies Institute of the Centre for Research and Technology Hellas (CERTH). The hybrid-format workshop brought together over 90 in-person participants and more than 120 online attendees, including AI researchers, industry professionals, and students. The four-hour event featured three thematic sessions, each spotlighting cutting-edge research, real-world applications, and critical discussions around the development and deployment of foundation models.

While the global narrative around Foundation Models—powerful, resource-intensive AI systems such as GPT-4 and DALL·E—has largely centred on technology giants, this workshop highlighted Europe’s growing momentum in developing open, community-driven alternatives, with notable advances in training, applications, and security.

A European Perspective on Foundation Models

The event commenced with remarks from Elisa Ricci (University of Trento & Fondazione Bruno Kessler), who outlined the workshop’s objectives: to explore the technical, societal, and application-related aspects of Foundation Models—large-scale AI systems that generalise across tasks and domains. Ricci emphasised the importance of European collaboration, public supercomputing infrastructure, open science, and inclusive design in shaping the future of these models.

European grassroots efforts are thriving. Projects such as LAION and EleutherAI demonstrate …

Session 1: Training Foundation Models

Jenia Jitsev (Jülich Supercomputing Centre & LAION) delivered a keynote on scaling laws and generalisation in open foundation models. He emphasised the importance of reproducible scaling laws for predicting performance, comparing learning procedures, and systematically searching for procedures that yield stronger generalisation and transferability, highlighting work on OpenCLIP, Re-LAION, openMaMMUT and OpenThinker. A key message was that, given access to vast public supercomputing resources, strong ideas, and a collaborative, transparent open-source spirit, academics can go surprisingly far and come close to the closed industry labs of the so-called hyperscalers. This makes a compelling case for further increasing support for open, academic AI efforts.

Frank Hutter (Prior Labs & ELLIS Institute Tübingen) introduced recent innovations in Tabular Foundation Models, a domain often overlooked in mainstream deep learning. He showcased TabPFN and TabPFN v2, which outperform traditional machine learning approaches such as gradient-boosted trees on tasks in sectors like finance and healthcare. Hutter demonstrated how synthetic data generation can enable powerful pretraining, showing that domain-specific foundation models have broad potential beyond NLP and vision.

Cees Snoek (University of Amsterdam) presented NeoBabel, a multilingual foundation model for image generation that natively understands six languages: English, Chinese, Dutch, French, Hindi, and Persian. NeoBabel tackles a major challenge: multilingual multimodal data is scarce. The team enhanced an English-only dataset using LLMs for translation and detailed recaptioning, thereby bootstrapping a multilingual dataset from English-only sources. The model is fully open-source, offering not only checkpoints but also a curated dataset and an extensible toolkit for reproducible research.

📄 NeoBabel paper: arXiv link

Session 2: Ethical & Safe Foundation Models

Chaired by Lorenzo Baraldi (University of Modena and Reggio Emilia), the second session explored the security and societal risks associated with foundation models.

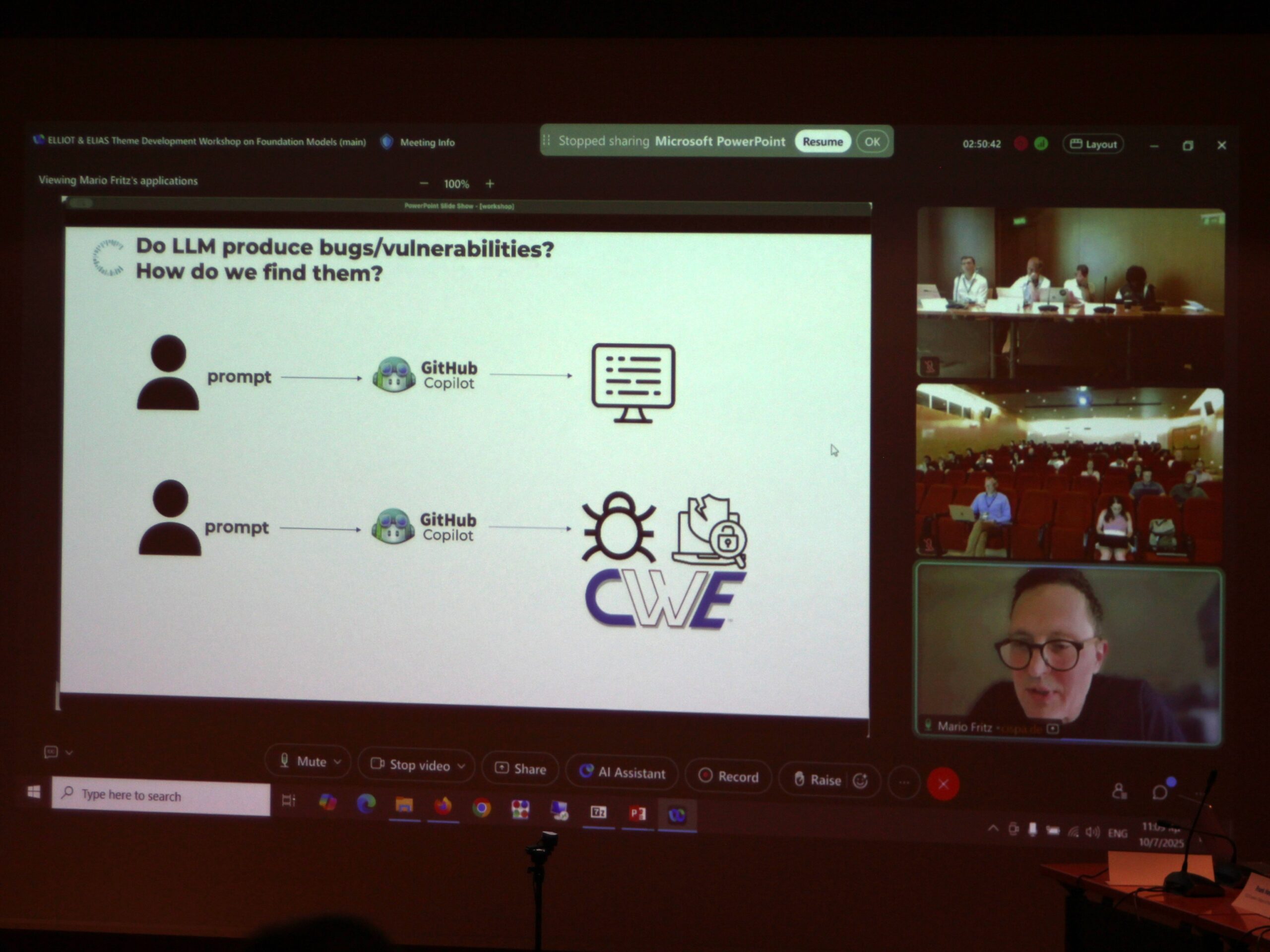

Mario Fritz (CISPA Helmholtz Center for Information Security) delivered a comprehensive talk on the security and safety landscape, covering issues such as prompt injection attacks, trust in code-generation models, and the dynamics of agent-based negotiation and collaboration. He explored how foundation models can amplify both risks and opportunities, stressing the need for transparent alignment strategies and automated red teaming.

Session 3: Applications of Foundation Models

Moderated by Dimosthenis Karatzas (Computer Vision Centre – CVC-CERCA & Autonomous University of Barcelona), this session showcased real-world applications of foundation models in robotics, video understanding, and industrial domains.

Marc Pollefeys (Microsoft & ETH Zurich) gave a broad overview of Spatial AI foundation models for 3D environments. His talk ranged from robot manipulation to world models for autonomous driving, highlighting progress on the recent GEM models and their application in real-world robotics and perception.

Matthieu Cord (Sorbonne University & Valeo) presented innovations in generative video pretraining with the VaViM and VaVAM models. By reducing token counts and shifting from discrete to continuous tokens, the team achieved significant gains in both training speed and performance, pushing the boundaries of video foundation models for automotive and control systems.

Dario Garcia-Gasulla (Barcelona Supercomputing Center) concluded the session with an in-depth look at training and evaluating LLMs using European HPC resources. He addressed post-training techniques, emphasising the importance of robust evaluation benchmarks, especially in specialised and regulated domains such as healthcare, chip design, and secure code generation. Garcia-Gasulla also highlighted the growing role of European AI Factories and Gigafactories in providing accessible compute and sovereign infrastructure for open AI development.

Building a Collaborative Future

Interactive Q&A segments followed each talk, with discussions ranging across the topics raised by the speakers. The event concluded with a wrap-up session led by Ricci, Karatzas, and Baraldi, who emphasised the importance of cross-sectoral collaboration to ensure that foundation models are developed in ways that are safe, inclusive, and aligned with European values.

Key takeaways from participants included:

- Support for open, multilingual, and multimodal models

- Investment in European computing infrastructure to level the playing field

- Stronger integration of ethics, regulation, and societal perspectives in AI development

- The value of synthetic data and innovative training techniques to democratise access

The workshop fostered vibrant discussion and provided valuable networking opportunities, culminating in a light lunch where participants continued to exchange ideas informally.

Watch the event recording here!

Looking Ahead

This TDW was the second in a series of thematic workshops organised by ELIAS, in collaboration with the ELLIOT and ELSA networks. It sent a clear message that Europe is not merely observing the foundation model revolution but actively shaping it.

Despite ongoing challenges in data availability and computational resources, Europe’s commitment to open models, responsible design, and regional relevance is yielding tangible results. Initiatives like LAION, NeoBabel, OpenCLIP, and AI Factories illustrate that a distributed, democratic AI future is not only possible but already underway.

This workshop was jointly organised by the ELIAS, ELLIOT & ELSA projects.

European Large Open Multi-Modal Foundation Models For Robust Generalization On Arbitrary Data Streams – ELLIOT (GA No. 101214398) aims to enhance general-purpose AI by developing large-scale, open multimodal foundation models with strong spatio-temporal understanding. Led by top European academic and industrial labs from the ELLIS and LAION communities, the project targets underrepresented time-relevant modalities such as industrial time series, remote sensing, and health data. Both real and synthetic data will be used, sourced from consortium partners and European Data Spaces, with synthetic data generated using current and novel generative AI methods. European HPC resources are integrated to support large-scale model training.

European Lighthouse on Secure and Safe AI – ELSA (GA No. 101070617) is a growing network of excellence that spearheads efforts in foundational safe and secure AI methodology research. ELSA’s founding members include European experts in all aspects of safe and secure AI, with particular focus on technical robustness, privacy-preserving techniques, and human agency and oversight. In addition, ELSA brings on board research and industry experts in six different sectors that are key application areas of safe and secure AI. ELSA builds on and extends the internationally recognised and well-positioned ELLIS (European Laboratory for Learning and Intelligent Systems) network of excellence.